Leading Technologies, Innovative Products, Dedicated Employees

We invite you to take a video tour of our wafer fabs, operations facilities and design centers to see how we work together to meet our customers’ requirements.

Watch Our Videos:

MACOM is Expanding

The addition of the Wolfspeed RF Business’ engineering capabilities, portfolio of GaN products and process technologies, and integrated design support positions MACOM to better address our customers’ next-generation RF challenges.

MACOM has established a European Semiconductor Center located near Paris in Limeil-Brévannes, France. The facility is dedicated to semiconductor technology development and fabrication of Monolithic Microwave Integrated Circuits (MMICs) to support the space, telecommunications and A&D markets and applications.

Combining MACOM’s high-performance microwave and optical semiconductor capabilities with Linear Communications Group’s module and subsystem design expertise strengthens our leadership position in mission-critical communications across a wide range of applications and customers.

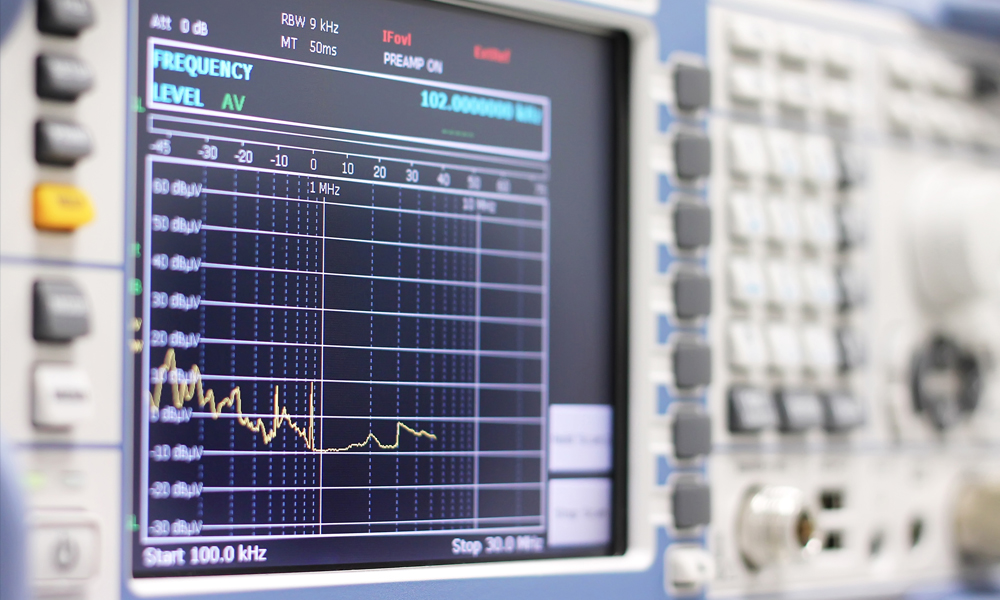

Linearization by Microwave Predistortion

Microwave Photonics Design

Space Based Amplifiers & Linearizers

MACOM Product Center

Pushing the limits for higher power, higher frequency, higher levels of integration, and higher data rates!

Every year we release to production over 150 new standard products and many more custom products! Check back frequently to stay up to date on our IC design and semiconductor process technology updates.

Our product qualification and release process is robust and demanding to ensure that every product we deliver, from the first to the millionth, meets or exceeds our customers' expectations.

RF/Microwave & mmWave

Optical

Networking

Transimpedance Amplifiers - Client Side: Octal 26 to 56 GBaud Linear Transimpedance Amplifier

Supporting A Secure and More Connected World

We apply advanced circuit design techniques, packaging solutions and semiconductor materials to the most demanding applications. Our solutions range from a single IC to complex subassemblies, all manufactured with the tightest quality control.

Our deep knowledge of high-speed signal processing, analog, mixed-signal and digital design, and optical semiconductors enables our customers to achieve the fastest possible connections inside and around the data center, whether NRZ, PAM-4, or coherent.

Our ICs address a broad range of markets including 5G wireless communications, microwave radio links, satellite ground stations and broadcast video.

How Can We Help You?

Our customer application teams have decades of experience and are ready to support you today! We recognize that our customers are under technical, schedule and cost pressures to execute, and we are here to help. We can provide product application support, recommend which IC may work best in your application, and help with your PCB design, all of which we hope will save you time in finishing your design. Let us help you accelerate your time to market!

Submit your engineering, technical, general and application-specific questions to our Support Team today.

Our technical staff in North America, Europe and Asia are ready to connect face to face or virtually. Don’t hesitate to reach out!